"transforms" : "addTimestampToTopic",

"transforms.addTimestampToTopic.type" : "org.apache.kafka.connect.transforms.TimestampRouter",

"transforms.addTimestampToTopic.topic.format" : "${topic}_${timestamp}",

"transforms.addTimestampToTopic.timestamp.format": "yyyy-MM-dd"

🎄 Twelve Days of SMT 🎄 - Day 11: Predicate and Filter

Apache Kafka 2.6 included KIP-585, which adds support for defining predicates against which transforms are conditionally executed, as well as a Filter Single Message Transform to drop messages. In combination, this means that you can conditionally drop messages.

As part of Apache Kafka, Kafka Connect ships with pre-built Single Message Transforms and Predicates, but you can also write your own. The API for each is documented: Transformation / Predicate. The predicates that ship with Apache Kafka are:

- RecordIsTombstone: the value part of the message is null (denoting a tombstone message)
- HasHeaderKey: matches if a header exists with the name given
- TopicNameMatches: matches based on the topic name, using a regular expression
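As a sketch of how the two fit together, the following fragment attaches the RecordIsTombstone predicate to a Filter transform so that tombstone messages are dropped. The aliases dropTombstones and isTombstone are arbitrary names chosen for illustration. The class names are the ones that ship with Apache Kafka 2.6 and later.

```json
{
  "transforms"                          : "dropTombstones",
  "transforms.dropTombstones.type"      : "org.apache.kafka.connect.transforms.Filter",
  "transforms.dropTombstones.predicate" : "isTombstone",

  "predicates"                          : "isTombstone",
  "predicates.isTombstone.type"         : "org.apache.kafka.connect.transforms.predicates.RecordIsTombstone"
}
```

Adding "transforms.dropTombstones.negate" : "true" would invert the predicate, so the Filter would drop every message except tombstones.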

🎄 Twelve Days of SMT 🎄 - Day 10: ReplaceField

The ReplaceField Single Message Transform has three modes of operation on fields of data passing through Kafka Connect:

- Include only the fields specified in the list (whitelist)
- Include all fields except the ones specified (blacklist)
- Rename field(s) (renames)
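Here is a minimal sketch that combines two of the modes. It assumes a value with the hypothetical fields cc_num, cust_id and party_type. The fragment drops cc_num and renames the other two fields. The renames parameter takes a comma-separated list of oldName:newName pairs. Recent Apache Kafka releases also accept exclude and include in place of the deprecated blacklist and whitelist.

```json
{
  "transforms"                      : "tidyFields",
  "transforms.tidyFields.type"      : "org.apache.kafka.connect.transforms.ReplaceField$Value",
  "transforms.tidyFields.blacklist" : "cc_num",
  "transforms.tidyFields.renames"   : "cust_id:customer_id,party_type:type"
}
```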

Scheduling Hugo Builds on GitHub pages with GitHub Actions

Over the years I’ve used various blogging platforms; after a brief dalliance with Blogger I started for real with the near-inevitable WordPress.com. From there I decided it would be fun to self-host using Ghost, and then, almost exactly two years ago to the day, decided it definitely was not fun to spend time patching and upgrading my blog platform instead of writing blog articles, so I headed over to my current platform of choice: Hugo hosted on GitHub Pages. This has worked extremely well for me since, doing everything I wanted from it until recently.

🎄 Twelve Days of SMT 🎄 - Day 9: Cast

The Cast Single Message Transform lets you change the data type of fields in a Kafka message, supporting numeric, string, and boolean types.
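For example, the following fragment casts one field to a 32-bit float and another to a 16-bit integer. The field names cost and units are hypothetical. The spec parameter takes comma-separated field:type pairs. A single type on its own, with no field name, casts the entire value instead.

```json
{
  "transforms"                : "castTypes",
  "transforms.castTypes.type" : "org.apache.kafka.connect.transforms.Cast$Value",
  "transforms.castTypes.spec" : "cost:float32,units:int16"
}
```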

🎄 Twelve Days of SMT 🎄 - Day 8: TimestampConverter

The TimestampConverter Single Message Transform lets you work with timestamp fields in Kafka messages. You can convert a string or a Unix epoch value into a native Timestamp type (or Date or Time), and the same in reverse too.

This is really useful to make sure that data ingested into Kafka is correctly stored as a Timestamp (if it is one), and also enables you to write a Timestamp out to a sink connector in a string format that you choose.
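As a sketch, assume a string field called txn_date (a hypothetical name) that holds values such as 2020-12-14 09:30:00. This fragment converts that field to a native Timestamp. The format parameter is a SimpleDateFormat pattern describing the string, and target.type can be string, unix, Date, Time, or Timestamp.

```json
{
  "transforms"                       : "convertTS",
  "transforms.convertTS.type"        : "org.apache.kafka.connect.transforms.TimestampConverter$Value",
  "transforms.convertTS.field"       : "txn_date",
  "transforms.convertTS.format"      : "yyyy-MM-dd HH:mm:ss",
  "transforms.convertTS.target.type" : "Timestamp"
}
```

To go the other way for a sink, you would set target.type to string, and the same format pattern then controls how the output string is written.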

🎄 Twelve Days of SMT 🎄 - Day 7: TimestampRouter

Just like the RegexRouter, the TimestampRouter can be used to modify the topic name of messages as they pass through Kafka Connect. The topic name is usually the basis for naming the object to which a sink connector writes messages, so this is a great way to achieve time-based partitioning of those objects if required. For example, instead of streaming messages from Kafka to an Elasticsearch index called cars, they can be routed to monthly indices, e.g. cars_2020-10, cars_2020-11, cars_2020-12, etc.

The TimestampRouter takes two arguments: the format of the final topic name to generate (topic.format), and the format of the timestamp to put in the topic name (timestamp.format, based on SimpleDateFormat).
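A sketch of a configuration that would produce the monthly index names above (cars_2020-10 and so on) follows; the alias routeByMonth is an arbitrary name. The timestamp comes from the Kafka message's own timestamp. Note the lowercase yyyy: in SimpleDateFormat, uppercase YYYY is the week-based year, which gives the wrong year for some dates around New Year.

```json
{
  "transforms"                               : "routeByMonth",
  "transforms.routeByMonth.type"             : "org.apache.kafka.connect.transforms.TimestampRouter",
  "transforms.routeByMonth.topic.format"     : "${topic}_${timestamp}",
  "transforms.routeByMonth.timestamp.format" : "yyyy-MM"
}
```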

🎄 Twelve Days of SMT 🎄 - Day 6: InsertField II

We kicked off this series by seeing on day 1 how to use InsertField to add the message timestamp to messages passing through a Kafka Connect sink connector. Today we’ll see how to use the same Single Message Transform to add a static field value, as well as the name of the Kafka topic, partition, and offset from which the message has been read.
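The topic, partition, and offset are added with the topic.field, partition.field, and offset.field parameters of InsertField. Here is a sketch; the output field names and the alias insertMetadata are arbitrary choices for illustration. The offset is only available in sink connectors.

```json
{
  "transforms"                                : "insertMetadata",
  "transforms.insertMetadata.type"            : "org.apache.kafka.connect.transforms.InsertField$Value",
  "transforms.insertMetadata.topic.field"     : "messageTopic",
  "transforms.insertMetadata.partition.field" : "messagePartition",
  "transforms.insertMetadata.offset.field"    : "messageOffset"
}
```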

"transforms" : "insertStaticField1",

"transforms.insertStaticField1.type" : "org.apache.kafka.connect.transforms.InsertField$Value",

"transforms.insertStaticField1.static.field": "sourceSystem",

"transforms.insertStaticField1.static.value": "NeverGonna"

🎄 Twelve Days of SMT 🎄 - Day 5: MaskField

If you want to mask fields of data as you ingest from a source into Kafka, or as you write from Kafka to a sink with Kafka Connect, the MaskField Single Message Transform is perfect for you. It retains the fields whilst replacing their values.

To use the Single Message Transform you specify the field to mask, and its replacement value. To mask the contents of a field called cc_num you would use:

"transforms" : "maskCC",

"transforms.maskCC.type" : "org.apache.kafka.connect.transforms.MaskField$Value",

"transforms.maskCC.fields" : "cc_num",

"transforms.maskCC.replacement" : "****-****-****-****"

🎄 Twelve Days of SMT 🎄 - Day 4: RegExRouter

If you want to change the name of the topic to which a source connector writes, or of the object that a sink connector creates on the target, the RegexRouter is exactly what you need.

To use the Single Message Transform you specify the pattern in the topic name to match, and its replacement. To drop a prefix of test- from a topic you would use:

"transforms" : "dropTopicPrefix",

"transforms.dropTopicPrefix.type" : "org.apache.kafka.connect.transforms.RegexRouter",

"transforms.dropTopicPrefix.regex" : "test-(.*)",

"transforms.dropTopicPrefix.replacement" : "$1"

🎄 Twelve Days of SMT 🎄 - Day 3: Flatten

The Flatten Single Message Transform (SMT) is useful when you need to collapse a nested message down to a flat structure.

To use the Single Message Transform you only need to reference it; there’s no additional configuration required:

"transforms" : "flatten",

"transforms.flatten.type" : "org.apache.kafka.connect.transforms.Flatten$Value"

🎄 Twelve Days of SMT 🎄 - Day 2: ValueToKey and ExtractField

Setting the key of a Kafka message is important because it ensures correct logical processing when messages are consumed across multiple partitions, and it is also a requirement when joining to messages in other topics. When using Kafka Connect, the connector may already set the key, which is great. If not, you can use these two Single Message Transforms (SMT) to set it as part of the pipeline, based on a field in the value part of the message.
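The two are typically chained. ValueToKey copies the field into the key as a struct, and ExtractField$Key then pulls the field's value out of that struct so that the key becomes a plain value. Here is a sketch of the chain, assuming the field is called id. The alias extractKeyFromStruct is an arbitrary name. Transforms run in the order they are listed in "transforms".

```json
{
  "transforms"                            : "copyIdToKey,extractKeyFromStruct",
  "transforms.copyIdToKey.type"           : "org.apache.kafka.connect.transforms.ValueToKey",
  "transforms.copyIdToKey.fields"         : "id",
  "transforms.extractKeyFromStruct.type"  : "org.apache.kafka.connect.transforms.ExtractField$Key",
  "transforms.extractKeyFromStruct.field" : "id"
}
```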

To use the ValueToKey Single Message Transform, specify the name of the field (id) that you want to copy from the value to the key:

"transforms" : "copyIdToKey",

"transforms.copyIdToKey.type" : "org.apache.kafka.connect.transforms.ValueToKey",

"transforms.copyIdToKey.fields" : "id",

🎄 Twelve Days of SMT 🎄 - Day 1: InsertField (timestamp)

You can use the InsertField Single Message Transform (SMT) to add the message timestamp into each message that Kafka Connect sends to a sink.

To use the Single Message Transform, specify the name of the field that you want to add to hold the message timestamp (timestamp.field):

"transforms" : "insertTS",

"transforms.insertTS.type" : "org.apache.kafka.connect.transforms.InsertField$Value",

"transforms.insertTS.timestamp.field": "messageTS"

Life as a Developer Advocate, nine months into a pandemic

My Workstation - 2020

Is a blog even a blog nowadays if it doesn’t include a "Here is my home office setup"?

Thanks to conferences all being online, and thus my talks being delivered from my study—and my habit of posting a #SpeakerSelfie each time I do a conference talk—I often get questions about my setup. Plus, I’m kinda pleased with it so I want to show it off too ;-)

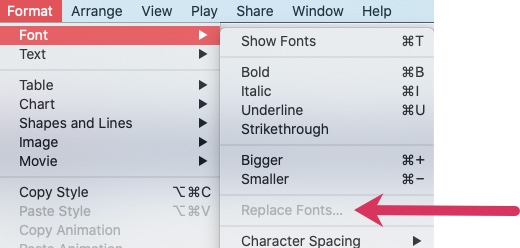

Keynote - Why is Replace Fonts greyed out?

Very short & sweet this post, but Google turned up nothing when I was stuck, so hopefully I’ll save someone else some head-scratching by sharing this.

Kafka Connect, ksqlDB, and Kafka Tombstone messages

As you may already realise, Kafka is not just a fancy message bus, or a pipe for big data. It’s an event streaming platform! If this is news to you, I’ll wait here whilst you read this or watch this…

Streaming Geopoint data from Kafka to Elasticsearch

Streaming data from Kafka to Elasticsearch is easy with Kafka Connect - you can see how in this tutorial and video.

One of the things that sometimes causes issues, though, is getting location data correctly indexed into Elasticsearch as geo_point fields to enable all that lovely location analysis. Unlike data types such as dates and numerics, Elasticsearch’s Dynamic Field Mapping won’t automagically pick up geo_point data, so you have to do two things:

ksqlDB - How to model a variable number of fields in a nested value (STRUCT)

There was a good question on Stack Overflow recently in which someone was struggling to find the appropriate ksqlDB DDL to model a source topic with a variable number of fields in a STRUCT.

Streaming XML messages from IBM MQ into Kafka into MongoDB

Let’s imagine we have XML data on a queue in IBM MQ, and we want to ingest it into Kafka to then use downstream, perhaps in an application or maybe to stream to a NoSQL store like MongoDB.